In May 2024, we published an article about GPT-4o and what it meant for businesses adopting AI. At the time, GPT-4o was the frontier — a single model that defined what modern AI could do. Two years later, that framing feels like ancient history. Consequently, this follow-up lays out where frontier AI models in 2026 actually stand, what changed since the GPT-4 era, and what the shift means for decision-makers planning AI investments today.

What Two Years Changed

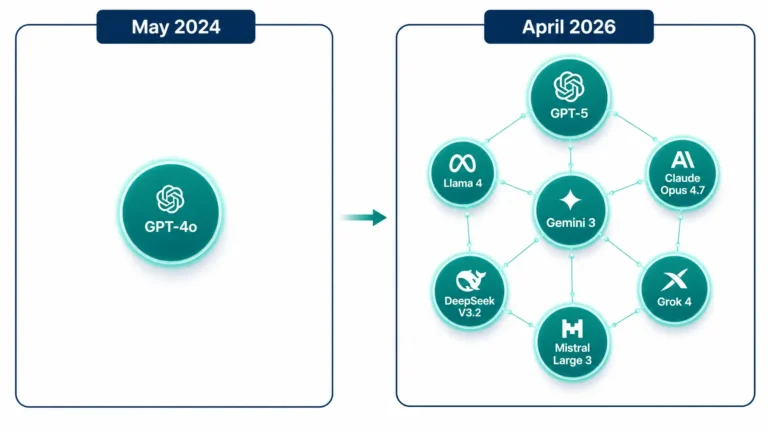

In 2024, AI adoption conversations usually started with one question: should we use GPT-4o, or wait? By 2026, that question barely makes sense. The frontier is no longer a single model. Instead, it is a set of roughly six or seven competing families from OpenAI, Anthropic, Google, Meta, DeepSeek, Mistral, and xAI — each with distinct strengths. Meanwhile, the capabilities that made GPT-4o impressive in 2024 are now table stakes. For example, multimodal input, voice conversation, and 128K-token context windows are baseline features across the field.

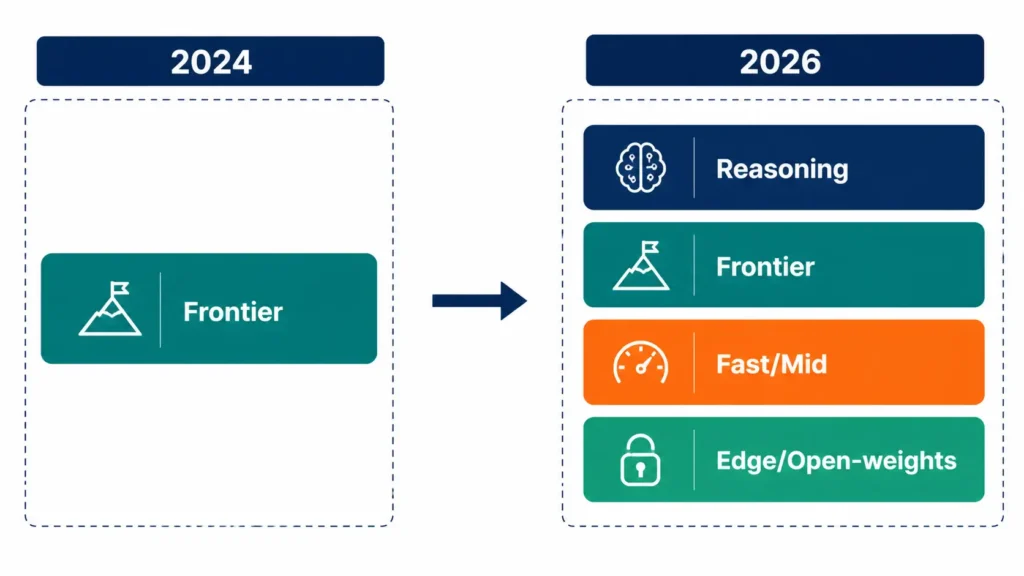

More importantly, a capability tier exists today that did not exist at all in 2024: the reasoning model category. Introduced by OpenAI’s o-series and matched by Anthropic’s extended thinking, it has become essential for complex analytical work. As a result, the 2024-style question “which model should we use?” has become “which tier of which family for which task?”

The Frontier in 2026

As of April 2026, the list of models operating at a genuine frontier level is short but diverse. Anthropic’s Claude Opus 4.7 leads on complex reasoning and multi-file code work. OpenAI’s GPT-5 family, with GPT-5.2 and GPT-5.3 variants, covers general-purpose flagship use alongside the o-series reasoning models. Google’s Gemini 3 Pro has become competitive across coding and agent workflows. Meta’s Llama 4 Scout and Llama 4 Maverick brought serious open-weight capability into the picture, while DeepSeek V3.2 and Mistral Large 3 round out the viable enterprise options.

Notably, xAI’s Grok 4 and Grok 4.1 Fast also made the frontier list this year. Consequently, instead of one reference model, serious teams evaluate at least three or four before committing. For a detailed comparison of the model families, including pricing and benchmark scores, see our AI Model Selection Guide.

The Reasoning Era

The biggest qualitative shift from the GPT-4 era is the emergence of reasoning models. Specifically, these are models that spend substantial compute thinking through a problem before generating the final answer. OpenAI’s o1 pioneered the pattern in late 2024, with o3 and o4-mini following in 2025; by 2026, every major provider had a reasoning-tier offering. Claude’s extended thinking and Gemini’s Deep Think mode operate on the same principle: more tokens spent on internal deliberation produce dramatically better results on complex problems.

The practical impact is substantial. For instance, tasks that seemed out of reach two years ago — complex refactoring across multi-file codebases, multi-step research synthesis, rigorous data analysis — can now be accomplished with reasoning models. However, reasoning tokens cost more. Therefore, the 2026 pattern is clear: use the standard tier for routine work and the reasoning tier when the task demands it. Reasoning for everything burns through the budget without adding value.

Pricing Has Inverted

In 2024, premium API access cost roughly $2.50 per million input tokens and $10 per million output tokens, and the reasonable alternatives were priced similarly. By 2026, the landscape has split into two very different price bands. Top-tier frontier models now cost $3 to $5 per million input tokens and $15 to $25 per million output tokens. Meanwhile, capable mid-tier and edge options have dropped to $0.14–$0.50 per million input tokens, a 10 to 50 times drop at the low end.

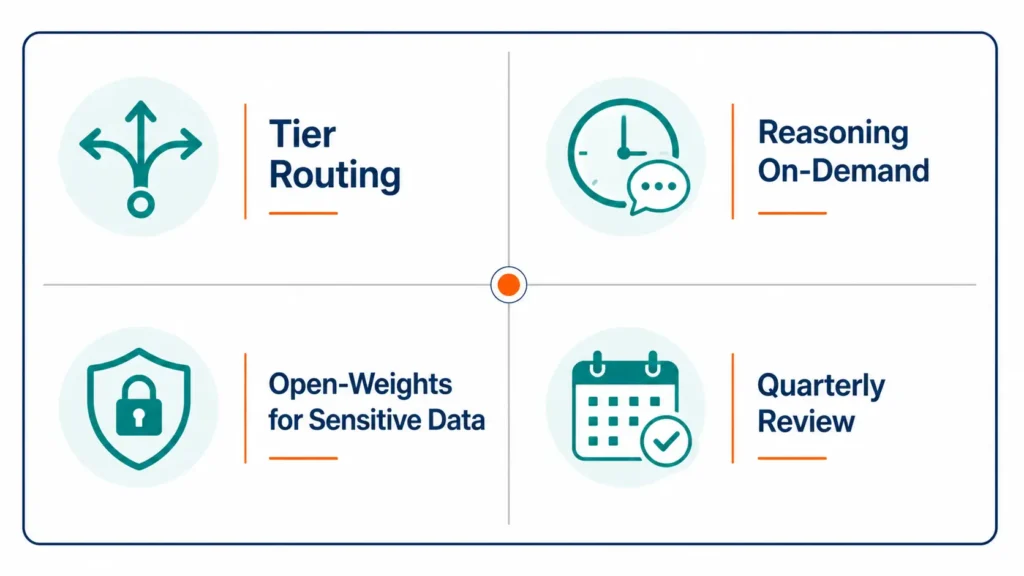

This inversion matters for architecture. Specifically, the 2026 pattern for cost-sensitive products is tiered routing: route simple requests to low-cost models, and escalate to the frontier only when necessary. In addition, prompt caching (90% savings on cached input tokens) and batch processing (50% off) have become standard cost-engineering tools. For the detailed playbook, see our Reducing AI Costs guide.
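To see how these levers combine, a little arithmetic helps. The sketch below is an illustration only, not a pricing tool: the function name `estimate_cost`, the token volumes, the mid-tier output price, and the choice of $3 and $15 from the frontier ranges are all assumptions, and the 90% caching and 50% batch discounts are applied as simple multipliers, which may not match how a given provider actually bills.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """Per-million-token prices in USD (illustrative values, not quotes)."""
    input_per_m: float
    output_per_m: float


# Hypothetical price points picked from the ranges quoted in the article.
FRONTIER = Price(input_per_m=3.00, output_per_m=15.00)
# The article gives no mid-tier output price; 0.50 is an assumption.
MID_TIER = Price(input_per_m=0.14, output_per_m=0.50)


def estimate_cost(price, input_tokens, output_tokens,
                  cached_fraction=0.0, batch=False):
    """Estimate the cost in USD for a given token volume.

    cached_fraction: share of input tokens served from the prompt cache,
                     billed here at a 90% discount (assumed multiplier).
    batch:           if True, halve the total (assumed 50% batch discount).
    """
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    fresh = input_tokens * (1.0 - cached_fraction)
    cached = input_tokens * cached_fraction
    input_cost = (fresh + cached * 0.10) * price.input_per_m / 1_000_000
    output_cost = output_tokens * price.output_per_m / 1_000_000
    total = input_cost + output_cost
    return total * 0.5 if batch else total


# Hypothetical monthly volume: 100M input tokens, 20M output tokens.
volume = (100_000_000, 20_000_000)
print(f"frontier, no discounts: ${estimate_cost(FRONTIER, *volume):.2f}")
print(f"frontier, 80% cached:   ${estimate_cost(FRONTIER, *volume, cached_fraction=0.8):.2f}")
print(f"mid-tier, no discounts: ${estimate_cost(MID_TIER, *volume):.2f}")
```

Under these assumed numbers, caching trims the frontier bill noticeably, but moving routine traffic to the mid tier changes it by an order of magnitude, which is why routing usually comes first.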

Open-Weights Caught Up

In 2024, open-weights models were a fallback option — capable, but clearly behind the proprietary frontier. By 2026, the gap has narrowed to the point where a self-hosted Llama 4 Maverick or Mistral Large 3 deployment can match many closed-model use cases at a fraction of the per-token cost. Notably, DeepSeek V3.2 delivers strong reasoning performance at prices even the commercial providers cannot match via API.

The practical question for businesses in 2026 is no longer “open or closed” but “where should each workload live?” Specifically, sensitive data might live on a self-hosted open-weights deployment while customer-facing workflows use a commercial API for quality and support. For teams weighing this decision, our Self-Hosted vs API guide walks through the trade-offs in depth.

What This Means for Your Business

First, stop treating AI adoption as a single-vendor decision. The 2026 frontier rewards teams that evaluate two or three options per use case rather than defaulting to whatever was current at project start. Second, architect for tier routing from day one. Specifically, a thin routing layer that selects the right model per request pays for itself within a quarter for any application handling more than a few thousand calls per day.
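A thin routing layer can be very small. The following is a minimal sketch under stated assumptions rather than a production design: the task labels, the prompt-length threshold, the `sensitive` flag, and the model identifiers are all hypothetical placeholders, and a real router would typically also handle fallbacks, retries, and logging.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SELF_HOSTED = "self_hosted"
    MID = "mid"
    FRONTIER = "frontier"
    REASONING = "reasoning"


@dataclass(frozen=True)
class Request:
    task: str                # hypothetical label, e.g. "support_triage"
    prompt: str
    sensitive: bool = False  # True if the data must stay on your own infrastructure


# Placeholder identifiers, not real API model names.
MODELS = {
    Tier.SELF_HOSTED: "self-hosted-open-weights",
    Tier.MID: "mid-tier-model",
    Tier.FRONTIER: "frontier-model",
    Tier.REASONING: "reasoning-model",
}

# Assumed task groupings; adjust to your own workload mix.
REASONING_TASKS = {"multi_file_refactor", "research_synthesis", "data_analysis"}
ROUTINE_TASKS = {"support_triage", "classification", "summarization"}
LONG_PROMPT_CHARS = 8_000  # arbitrary threshold for "too long for the cheap tier"


def pick_tier(req: Request) -> Tier:
    """Choose the cheapest tier that is plausibly good enough for the request."""
    if req.sensitive:
        return Tier.SELF_HOSTED
    if req.task in REASONING_TASKS:
        return Tier.REASONING
    if req.task in ROUTINE_TASKS and len(req.prompt) < LONG_PROMPT_CHARS:
        return Tier.MID
    return Tier.FRONTIER


def route(req: Request) -> str:
    """Return the model identifier the request should be sent to."""
    return MODELS[pick_tier(req)]


print(route(Request("support_triage", "Where is my invoice?")))
print(route(Request("multi_file_refactor", "Rename the billing module.")))
print(route(Request("support_triage", "Patient record attached.", sensitive=True)))
```

Keeping the decision in one pure function like `pick_tier` makes it easy to change the rules after each review without touching the code that calls the providers.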

Third, reserve reasoning tiers for tasks that genuinely need them. Those tokens cost real money. As a result, the teams getting the most value in 2026 use reasoning for five to fifteen percent of their traffic — not everything. Fourth, review your model choices quarterly. The 2024 question, “Which model should we standardize on?” is the wrong question in 2026. Rather, the right question is “when did we last check whether our choices still make sense?”
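A quarterly check of this kind does not need heavy tooling. The sketch below assumes you already have, for each model, a mean quality score on your own test set and a measured cost per thousand requests; the `EvalResult` type, the `review` function, the 0.02 tolerance, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    model: str
    quality: float      # mean score on your own test set, 0.0 to 1.0
    cost_per_1k: float  # USD per 1,000 requests on that test set


def review(current, alternatives, quality_tolerance=0.02):
    """Return (model, reason) pairs for alternatives worth a closer look.

    An alternative is flagged when its quality beats the current model by
    more than the tolerance, or when it is cheaper while staying within
    the tolerance of the current model's quality.
    """
    findings = []
    for alt in alternatives:
        delta_q = alt.quality - current.quality
        if delta_q > quality_tolerance:
            findings.append((alt.model, "higher quality"))
        elif delta_q >= -quality_tolerance and alt.cost_per_1k < current.cost_per_1k:
            findings.append((alt.model, "cheaper at comparable quality"))
    return findings


# Made-up numbers purely for illustration.
current = EvalResult("current-model", quality=0.86, cost_per_1k=12.0)
alternatives = [
    EvalResult("alt-a", quality=0.85, cost_per_1k=4.0),
    EvalResult("alt-b", quality=0.91, cost_per_1k=15.0),
]
for model, reason in review(current, alternatives):
    print(f"{model}: {reason}")
```

An empty result is also useful: it documents that the current choice was checked and still holds.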

How Pegotec Stays Current

We rebuild our internal frontier-model benchmarks quarterly, covering our actual workload mix: code assistance, content generation, support triage, and multi-step agents. Consequently, when we recommend a model choice to a client, the recommendation is grounded in last-quarter data rather than vendor marketing. In addition, we keep at least one open-weights deployment running in parallel to the commercial API track, which gives our teams real comparative data on cost and quality.

If you are planning an AI-enabled product in 2026 and want an honest read on which tier and which provider fits your specific use case, contact Pegotec for a no-obligation consultation. Furthermore, for the current head-to-head model details, our AI Model Selection Guide stays up to date with pricing and benchmark shifts.

Conclusion

Frontier AI in 2026 is not one model. Rather, it is a set of competing families, each strongest at different tasks, priced across two distinct bands, with reasoning as a first-class capability tier that did not exist when GPT-4o shipped. In short, the 2024-era question “which AI?” has become the 2026 question “which tier of which provider for which workload?” Above all, the teams winning with AI in 2026 treat model selection as a quarterly decision informed by their own data, not a one-time standardization locked in at project start.

Frequently Asked Questions

Is GPT-4o still worth using in 2026?

GPT-4o remains available and capable for many tasks. However, GPT-5 variants and the current Claude, Gemini, and Grok flagships outperform it in complex reasoning, long-context work, and multi-step agent tasks. For cost-sensitive workloads, newer mid-tier models priced at $0.14 to $0.50 per million input tokens typically match or exceed GPT-4o quality at a fraction of the price. In short, it is no longer the default choice.

What are reasoning models, and when should we use them?

Reasoning models spend additional compute time thinking through a problem before responding. Examples include OpenAI’s o-series, Claude with extended thinking, and Gemini Deep Think. Specifically, they excel at complex refactoring, multi-step analysis, and rigorous synthesis. However, they cost substantially more per call. Therefore, use them for the five to fifteen percent of your workload that genuinely needs careful deliberation, not for routine requests that a standard model handles fine.

Which model should we standardize on?

Standardizing on one model is the wrong frame for 2026. Instead, pick a primary flagship for complex work (Claude Opus 4.7, GPT-5, or Gemini 3 Pro are all defensible) and a cheap mid-tier model for routine requests. Then route traffic per task. Teams that evaluate quarterly and route intelligently consistently outperform teams that lock in one vendor at project start.

Have open-weights models caught up with commercial APIs?

For many workloads, yes. Llama 4 Maverick, Mistral Large 3, and DeepSeek V3.2 now match commercial API quality on a broad range of tasks at a fraction of the per-token cost. However, at the absolute frontier (complex reasoning, long-context agent work) the top commercial models still sit slightly ahead. Consequently, the 2026 pattern for most teams is hybrid: self-host open-weights for sensitive or high-volume work, and use the commercial frontier for tasks that truly need it.

How often should we review our model choices?

Quarterly at a minimum. Model capabilities, prices, and benchmark leadership change meaningfully every three to six months. In practice, a 30-minute quarterly review of your top one or two workloads, comparing your current model against two alternatives, reliably surfaces either cost savings or quality improvements. In short, AI model selection is no longer a one-time decision.
